etcd in Kubernetes

What is etcd?

etcd is an open-source, distributed key-value store that supports resource configuration, service discovery, and the coordination of distributed systems such as clusters and containers. Systems built on etcd use it to distribute and schedule work across multiple hosts, roll out automatic updates more safely, and set up overlay networking for containers. etcd is designed to provide redundancy and resilience in cloud systems and is the standard data store used in Kubernetes.

Key concepts and components of etcd

etcd is a strongly consistent key-value data store. In the v3 API, which Kubernetes uses, data lives in a flat key space, although keys are conventionally written as path-like strings so that related entries share a prefix and resemble a directory tree. In practice, each key identifies a resource, and its value holds that resource’s state. Changes to resources and their state are recorded in etcd, which supports operations such as scheduling tasks.
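As a rough illustration of how keys map to resources, the sketch below wraps etcdctl to list the keys stored under a prefix. It assumes etcdctl (v3 API) is installed and can reach the cluster; list_keys is a hypothetical helper name, and /registry is the prefix Kubernetes uses by default, which may differ in a customized cluster.

```shell
# list_keys PREFIX: print every key stored under PREFIX, without values.
# Hypothetical helper; on a TLS-enabled cluster etcdctl also needs the
# --cacert, --cert and --key options (or the matching ETCDCTL_* variables).
list_keys() {
  etcdctl get "$1" --prefix --keys-only
}

# Example call (requires a reachable etcd, so it is left commented out):
# list_keys /registry/pods/default
```

Listing keys only is usually the more readable option here, because Kubernetes stores most values in a binary encoding rather than plain text.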

etcd achieves consistency through the Raft consensus algorithm. When a cluster has several nodes, one of them is elected leader by vote: a candidate becomes leader only when a majority of the nodes vote for it.

The leader acts as the single source of truth. A write request sent to any node in the cluster is forwarded to the leader, and reads that must reflect the latest committed state are also confirmed with the leader. The leader appends the change to its log, replicates it to the follower nodes, and commits it once a majority of nodes have acknowledged it.

Once this information is received, the follower nodes make the corresponding changes to match the leader node. In the event that a leader node fails or faces issues, a new leader is elected by the same algorithm. As long as the majority of the nodes in the system are functional, a new leader can be elected, and requests can be handled.
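The majority rule can be made concrete with a little arithmetic. The sketch below is only an illustration: quorum and fault_tolerance are hypothetical helper names, and the cluster sizes in the loop are arbitrary examples.

```shell
# quorum N: the smallest majority of an N-member cluster, floor(N/2) + 1.
quorum() {
  echo $(( $1 / 2 + 1 ))
}

# fault_tolerance N: how many members can fail while a majority remains.
fault_tolerance() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

# Print the figures for a few example cluster sizes.
for n in 1 3 4 5; do
  echo "members=$n quorum=$(quorum "$n") tolerated_failures=$(fault_tolerance "$n")"
done
```

A four-member cluster tolerates one failure, the same as a three-member cluster, which is why odd cluster sizes are usually preferred.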

etcd’s role in Kubernetes

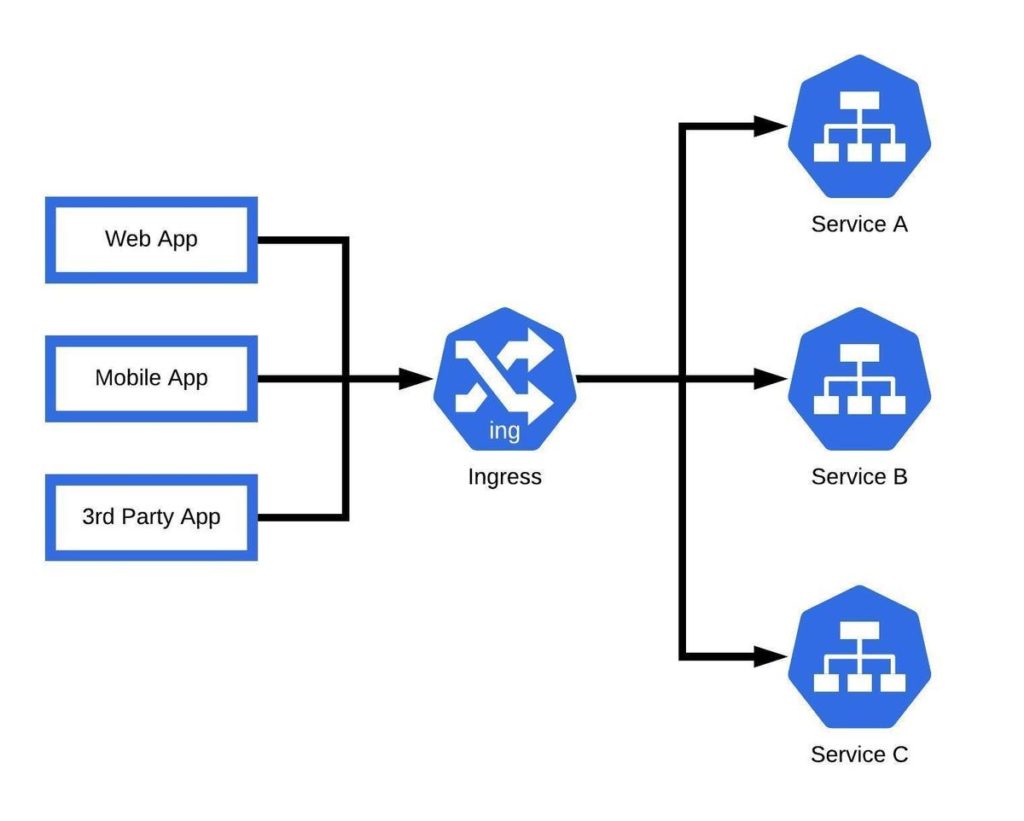

Kubernetes interacts with etcd through its API server. etcd stores all cluster information, such as the current state, desired state, resource configuration, and runtime data. Operators can run etcd as a single-node or multi-node cluster, and the Kubernetes API server is the component that reads from and writes to it. As requests are handled by the various nodes in the cluster, the data in etcd changes. Kubernetes taps into this mechanism in the following ways:

- etcd holds the status that each node reports through the API server, which shows which resources are free. Based on this, the control plane assigns the task to the relevant resource.

- Node health is reported at regular intervals and recorded in etcd. Thus, if any node is overloaded, underused, or unresponsive, the applicable data is available in etcd, and the control plane can remove the node or reassign its tasks to another node.

- etcd implements several mechanisms to avoid resource starvation and ensure the availability and reliability of its services.

- etcd’s features, such as shared configuration, service discovery, leader election, distributed locks, and the watch API, help address cross-communication concerns in Kubernetes by improving synchronization and interaction among components and services.
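The watch API mentioned in the last item can be tried from the command line. This is a minimal sketch that assumes etcdctl (v3 API) is installed and pointed at a test cluster; watch_prefix, set_config, and the /demo/config/ keys are hypothetical names chosen for illustration, not keys Kubernetes itself uses.

```shell
# watch_prefix PREFIX: stream every change under PREFIX until interrupted.
watch_prefix() {
  etcdctl watch --prefix "$1"
}

# set_config KEY VALUE: write a key; watchers on a matching prefix are notified.
set_config() {
  etcdctl put "$1" "$2"
}

# Example session (requires a reachable etcd, so it is left commented out):
#   terminal 1: watch_prefix /demo/config/
#   terminal 2: set_config /demo/config/log-level debug
```

With the watcher running in one terminal, each write from the other terminal should show up as an event. Kubernetes components rely on the same mechanism, through the API server, to react to changes without polling.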

Deployment and configuration of etcd

There are two ways to deploy etcd on a Kubernetes cluster:

etcd on Control Plane Node

etcd runs on the same nodes as the control plane. This is the default deployment mode, but it is less resilient: if a control plane node fails, its etcd member is lost with it, and with a single control plane node the whole system faces downtime. Furthermore, if the node is compromised in a security breach, the security of the complete cluster is at risk.

etcd on a Dedicated Cluster

etcd instances run on a dedicated cluster in the Kubernetes environment, separate from the control plane nodes. Engineers can run single-node clusters for testing purposes, but production requests and services are considerably more complex, so production etcd should run as a multi-node cluster.

Single-node etcd Cluster

- Run etcd with the following command:

etcd --listen-client-urls=http://$PRIVATE_IP:2379 --advertise-client-urls=http://$PRIVATE_IP:2379

- Start the Kubernetes API server with the flag --etcd-servers=$PRIVATE_IP:2379.

- Make sure PRIVATE_IP is set to your etcd client IP.

Multi-node etcd Cluster

- Run etcd with the following command:

etcd --listen-client-urls=http://$IP1:2379,http://$IP2:2379,http://$IP3:2379,http://$IP4:2379,http://$IP5:2379 --advertise-client-urls=http://$IP1:2379,http://$IP2:2379,http://$IP3:2379,http://$IP4:2379,http://$IP5:2379

- Start the Kubernetes API servers with the flag --etcd-servers=$IP1:2379,$IP2:2379,$IP3:2379,$IP4:2379,$IP5:2379.

- Make sure the IP<n> variables are set to your client IP addresses.
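Typing long endpoint lists by hand is error-prone, so a small helper can build them. The sketch below is an illustration under stated assumptions: join_endpoints and check_health are hypothetical helper names, the 10.0.0.x addresses are placeholders, and check_health needs etcdctl plus a running cluster.

```shell
# join_endpoints SCHEME PORT IP...: build a comma-separated endpoint list.
join_endpoints() {
  local scheme="$1" port="$2"
  shift 2
  local out="" ip
  for ip in "$@"; do
    # Prepend a comma only when the list already has an entry.
    out="${out:+$out,}${scheme}://${ip}:${port}"
  done
  echo "$out"
}

# check_health ENDPOINTS: ask every listed member whether it is healthy.
check_health() {
  etcdctl --endpoints="$1" endpoint health
}

# Placeholder addresses; replace them with your own member IPs.
ENDPOINTS="$(join_endpoints http 2379 10.0.0.1 10.0.0.2 10.0.0.3)"
echo "--etcd-servers=${ENDPOINTS}"
```

Once the members are running, check_health "$ENDPOINTS" gives a quick view of which members respond before the API servers are pointed at them.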

Backup and disaster recovery

etcd must be backed up regularly so that the cluster can be recovered after a failure or a security incident. Strategies for backup and recovery include:

- Backing up etcd data using:

  - Built-in snapshot

  - Volume snapshot

  - Snapshot using etcdctl

- Replacing a failed etcd member

- Restoring an etcd cluster
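The snapshot and restore steps above can be sketched as two small shell functions. This is a hedged example, not a complete backup procedure: backup_etcd and restore_etcd are hypothetical helper names, the snapshot file naming is an assumption, and the restore step assumes etcd 3.5 or later, where etcdutl is available (older releases use etcdctl snapshot restore instead). A TLS-enabled cluster also needs the --cacert, --cert and --key options on etcdctl.

```shell
# backup_etcd ENDPOINT DIR: save a timestamped snapshot and print its path.
backup_etcd() {
  local endpoint="$1" dir="$2"
  local file="${dir}/etcd-snapshot-$(date +%Y%m%d-%H%M%S).db"
  # Send etcdctl's progress output to stderr so stdout carries only the path.
  etcdctl --endpoints="$endpoint" snapshot save "$file" >&2 || return 1
  echo "$file"
}

# restore_etcd FILE DATA_DIR: unpack a snapshot into a fresh data directory.
restore_etcd() {
  etcdutl snapshot restore "$1" --data-dir "$2"
}

# Example calls (require etcd tooling and a cluster, so left commented out):
# snap="$(backup_etcd http://127.0.0.1:2379 /var/backups/etcd)"
# restore_etcd "$snap" /var/lib/etcd-restored
```

After a restore, etcd has to be restarted with its data directory pointing at the restored location before the API server can use it again.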

Security and authentication

etcd is a highly valuable data source, and DevOps engineers must harden its security. A few security measures involve:

- Using Linux firewalls to allow access only to the nodes that need it.

- Using TLS (Transport Layer Security) to secure communication between the API server and etcd.

- Using valid certificates, issued by a trusted certificate authority, so that each side can verify the other.

  - Note: Set --auto-tls to false to ensure self-signed certificates are disallowed.

- Using encryption to protect data at rest.

- Using RBAC authorization for API calls to limit access to etcd.

- Authenticating API requests.
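The TLS-related measures above translate into a handful of flags on etcd and on the API server. The sketch below only prints the flags so they can be reviewed. The helper names, the example IP address, and the certificate file names under the certificate directory are all assumptions for illustration; the flags themselves are standard etcd and kube-apiserver options.

```shell
# etcd_tls_args IP CERT_DIR: print etcd flags for TLS with client-certificate
# authentication, one per line. File names under CERT_DIR are assumptions.
etcd_tls_args() {
  local ip="$1" certs="$2"
  printf '%s\n' \
    "--listen-client-urls=https://${ip}:2379" \
    "--advertise-client-urls=https://${ip}:2379" \
    "--cert-file=${certs}/server.crt" \
    "--key-file=${certs}/server.key" \
    "--client-cert-auth=true" \
    "--trusted-ca-file=${certs}/ca.crt" \
    "--auto-tls=false"
}

# apiserver_etcd_args IP CERT_DIR: print the matching kube-apiserver flags.
apiserver_etcd_args() {
  local ip="$1" certs="$2"
  printf '%s\n' \
    "--etcd-servers=https://${ip}:2379" \
    "--etcd-cafile=${certs}/ca.crt" \
    "--etcd-certfile=${certs}/apiserver-etcd-client.crt" \
    "--etcd-keyfile=${certs}/apiserver-etcd-client.key"
}

# Placeholder values; print the etcd flags for review.
etcd_tls_args 10.0.0.1 /etc/etcd/pki
```

With paths that contain no spaces, the output can be passed straight to the binary, for example etcd $(etcd_tls_args "$PRIVATE_IP" /etc/etcd/pki). Encryption of data at rest is configured separately on the API server and is not covered by this sketch.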

Use cases and examples of etcd

Cluster coordination and service discovery

etcd provides a centralized and reliable data store for configuration data, coordinating cluster-wide activities, and facilitating service discovery. It allows cluster components to access and update shared configuration settings, ensuring consistency while also serving as a service discovery mechanism.

Running complex services

etcd’s strongly consistent storage helps complex services run smoothly in the Kubernetes environment and reduces the risk of issues such as downtime and failure to handle requests.

Contributing to security

etcd supports automatic TLS as well as authentication through TLS client certificates, which helps protect its cluster data from malicious attacks and security breaches that could bring down the system.