Kubernetes Cluster

What is a Kubernetes Cluster?

At its core, Kubernetes coordinates a cluster of connected machines, called nodes, so that they work together as a single unit. Kubernetes lets you deploy containerized applications to a cluster without assigning them to specific machines. For this to work, applications must be architected and packaged so that they are not coupled to individual hosts. That’s where containers come in. Containers are lighter and more flexible than virtual machines because they package only the application, its dependencies, and the services it requires. In Kubernetes, containers are not tied to individual machines and can instead run anywhere in the cluster. Kubernetes automates the distribution and scheduling of application containers across the cluster's nodes, abstracting away the physical or virtual machines the applications run on.

What components make up a Kubernetes cluster?

A Kubernetes cluster contains at least one master node (the control plane) and any number of worker nodes; each node can be either a physical or a virtual machine. The master node controls the state of the cluster and is the origin of task assignments. Worker nodes run the containers that make up the applications. Namespaces allow operators to partition one physical cluster into multiple virtual clusters and divide resources among different teams. Under the hood, several other components help clusters function:

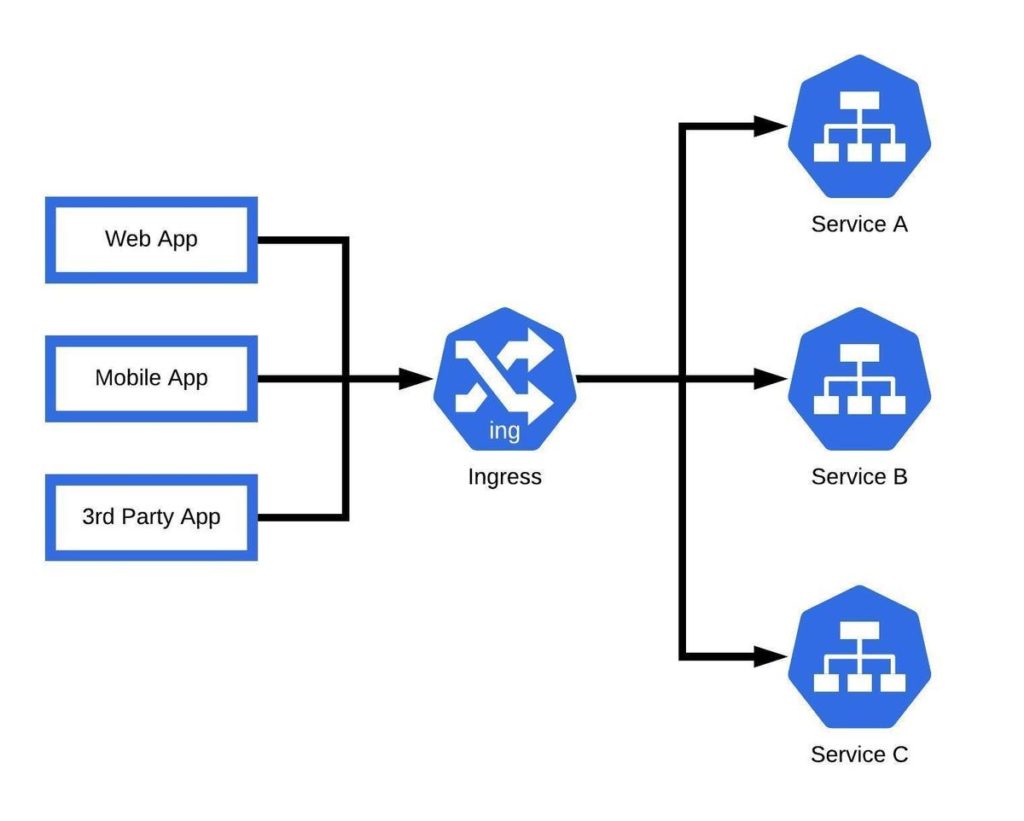

- Scheduler: Assigns pods to nodes based on their declared resource requirements and other constraints. When a pod has no assigned node, the scheduler selects one for it to run on.

- API Server: Exposes a REST interface to Kubernetes resources, acting as the front end of the Kubernetes control plane.

- Kubelet: An agent that runs on each node and ensures that the containers described in a pod's specification are running and healthy.

- Kube-proxy: Runs on each node and maintains the network rules that let traffic reach pods across the cluster.

- Controller Manager: Runs controller processes that reconcile the actual state of the cluster with the desired state. These include the node controller, the replication controller, and the endpoints controller.

- etcd: A consistent and highly available key-value store that holds all cluster data.
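To make the scheduler's role concrete, here is a minimal sketch of a filter-then-score placement decision. It is a toy model, not the real scheduler's algorithm: the `Node` and `Pod` classes, their fields, and the "most spare CPU" scoring rule are all assumptions chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    # Hypothetical view of a node's unallocated capacity.
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class Pod:
    # Hypothetical view of a pod's resource requests.
    name: str
    cpu_request_millicores: int
    memory_request_mib: int


def pick_node(pod: Pod, nodes: list[Node]) -> Optional[Node]:
    """Return a node that fits the pod, or None if no node fits."""
    # Filter: keep only nodes that can satisfy both requests.
    feasible = [
        n for n in nodes
        if n.free_cpu_millicores >= pod.cpu_request_millicores
        and n.free_memory_mib >= pod.memory_request_mib
    ]
    if not feasible:
        return None  # In this toy model the pod simply stays unscheduled.
    # Score: prefer the node with the most CPU left after placement
    # (an assumed rule for this sketch).
    return max(
        feasible,
        key=lambda n: n.free_cpu_millicores - pod.cpu_request_millicores,
    )


nodes = [
    Node("node-a", free_cpu_millicores=500, free_memory_mib=1024),
    Node("node-b", free_cpu_millicores=2000, free_memory_mib=4096),
    Node("node-c", free_cpu_millicores=4000, free_memory_mib=256),
]
pod = Pod("web-1", cpu_request_millicores=1000, memory_request_mib=512)

chosen = pick_node(pod, nodes)
print(chosen.name if chosen else "unschedulable")  # prints "node-b"
```

Here `node-a` lacks CPU and `node-c` lacks memory, so only `node-b` survives the filter step.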

How to work with a Kubernetes cluster in practice

To work with a Kubernetes cluster, an operator must first define the desired state. The main elements that define it are the applications to run, the container images they use, the resources they need, and the number of replicas. Manifest files, usually written in YAML (JSON is also accepted), specify the application type and the number of replicas needed to run the system.
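As an illustration of what such a manifest contains, the sketch below builds a Deployment manifest as a Python dictionary and prints it as JSON, a format the Kubernetes API accepts. The helper `deployment_manifest`, the application name `hello-web`, the image tag, and the resource values are hypothetical and chosen only for this example.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int) -> dict:
    """Build a minimal Deployment manifest (illustrative helper)."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            # Desired number of identical pods.
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            # Assumed example values for resource requests.
                            "resources": {
                                "requests": {"cpu": "250m", "memory": "128Mi"}
                            },
                        }
                    ]
                },
            },
        },
    }


manifest = deployment_manifest("hello-web", "nginx:1.25", replicas=3)
print(json.dumps(manifest, indent=2))
```

Saved to a file, output like this could be submitted to a cluster with `kubectl apply -f <file>`.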

Developers can use the kubectl command-line interface or call the Kubernetes API directly to submit the desired state to the master node. From there, the desired state reaches the worker nodes through the Kubernetes API. Once the desired state is established, the control plane works to keep the cluster's actual state consistent with it. It does this by running continuous control loops that compare the actual state with the desired state. If the two diverge, for example when a replica crashes, the control plane takes corrective action, such as starting a replacement. On each node, the kubelet's Pod Lifecycle Event Generator (PLEG) reports container state changes so that the kubelet can keep pods in sync, while controllers such as the Deployment controller handle updates and rollbacks.
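The control-loop idea can be sketched in a few lines. This is a simplified model under stated assumptions, not Kubernetes code: the `reconcile` function and the `replica-N` naming are hypothetical, and a real controller observes state through the API rather than a local list.

```python
def reconcile(
    desired_replicas: int, running: list[str], next_id: int
) -> tuple[list[str], int]:
    """Run one pass of a toy control loop.

    Returns the updated list of running replicas and the next unused id.
    """
    running = list(running)  # work on a copy of the observed state
    # Too few replicas: start new ones until the counts match.
    while len(running) < desired_replicas:
        running.append(f"replica-{next_id}")
        next_id += 1
    # Too many replicas: stop the most recently started ones.
    while len(running) > desired_replicas:
        running.pop()
    return running, next_id


# Initial rollout: nothing is running yet.
running, next_id = reconcile(3, [], 0)
print(running)  # ['replica-0', 'replica-1', 'replica-2']

# Simulate a crash, then let the loop repair the difference.
running.remove("replica-1")
running, next_id = reconcile(3, running, next_id)
print(running)  # ['replica-0', 'replica-2', 'replica-3']
```

The operator never starts the replacement by hand; declaring the desired replica count is enough for the loop to converge on it.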

Creating a Kubernetes Cluster

Kubernetes clusters can be created and deployed on either physical or virtual machines. Most new users start with Minikube to create their first cluster. Minikube is an open-source tool that runs on Linux, Windows, and macOS (the Kubernetes documentation offers a tutorial on creating a cluster with Minikube). By default it creates a simple local cluster with a single node that acts as both master and worker. The Minikube command-line interface also provides basic bootstrapping operations to start, stop, and delete the cluster.
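The three lifecycle operations map to the `minikube start`, `minikube stop`, and `minikube delete` commands. The sketch below wraps them in a small Python helper; the function names and the dry-run default are assumptions made for this example, and it only prints the command unless Minikube is installed and `dry_run` is disabled.

```python
import shutil
import subprocess

# Lifecycle subcommands mentioned in the text.
ACTIONS = ("start", "stop", "delete")


def minikube_command(action: str) -> list[str]:
    """Return the argument list for a Minikube lifecycle action."""
    if action not in ACTIONS:
        raise ValueError(f"unsupported action: {action!r}")
    return ["minikube", action]


def run_minikube(action: str, dry_run: bool = True) -> list[str]:
    """Run the command, or just print it in dry-run mode."""
    cmd = minikube_command(action)
    if dry_run or shutil.which("minikube") is None:
        # Nothing is executed if dry_run is set or the binary is missing.
        print("would run:", " ".join(cmd))
    else:
        subprocess.run(cmd, check=True)
    return cmd


for action in ACTIONS:
    run_minikube(action)
```

Calling `run_minikube("start", dry_run=False)` on a machine with Minikube installed would actually create the local cluster.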